Percentage Within 1 Standard Deviation

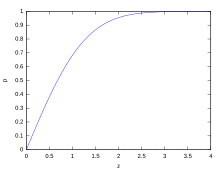

Cumulative probability of a normal distribution with expected value 0 and standard divergence 1

In statistics, the standard deviation is a measure of the amount of variation or dispersion of a set of values.[ane] A low standard deviation indicates that the values tend to be close to the mean (also called the expected value) of the set up, while a high standard deviation indicates that the values are spread out over a wider range.

Standard difference may be abbreviated SD, and is nigh commonly represented in mathematical texts and equations by the lower case Greek letter of the alphabet σ (sigma), for the population standard deviation, or the Latin letter s , for the sample standard difference.

The standard deviation of a random variable, sample, statistical population, information ready, or probability distribution is the foursquare root of its variance. It is algebraically simpler, though in practice less robust, than the average accented divergence.[two] [3] A useful holding of the standard departure is that, unlike the variance, information technology is expressed in the same unit as the data.

The standard deviation of a population or sample and the standard error of a statistic (e.g., of the sample mean) are quite different, just related. The sample mean's standard mistake is the standard difference of the ready of means that would be institute by cartoon an infinite number of repeated samples from the population and computing a mean for each sample. The mean's standard error turns out to equal the population standard divergence divided by the square root of the sample size, and is estimated by using the sample standard divergence divided past the foursquare root of the sample size. For example, a poll'south standard error (what is reported as the margin of error of the poll), is the expected standard divergence of the estimated mean if the aforementioned poll were to be conducted multiple times. Thus, the standard fault estimates the standard deviation of an gauge, which itself measures how much the estimate depends on the item sample that was taken from the population.

In science, it is common to report both the standard deviation of the data (every bit a summary statistic) and the standard error of the guess (equally a mensurate of potential error in the findings). By convention, only furnishings more than two standard errors away from a nada expectation are considered "statistically pregnant", a safeguard against spurious conclusion that is really due to random sampling error.

When merely a sample of data from a population is bachelor, the term standard deviation of the sample or sample standard divergence tin can refer to either the higher up-mentioned quantity every bit applied to those data, or to a modified quantity that is an unbiased estimate of the population standard deviation (the standard departure of the entire population).

Basic examples [edit]

Population standard deviation of grades of eight students [edit]

Suppose that the entire population of interest is eight students in a item class. For a finite prepare of numbers, the population standard deviation is constitute by taking the square root of the average of the squared deviations of the values subtracted from their boilerplate value. The marks of a course of eight students (that is, a statistical population) are the following eight values:

These eight information points take the mean (boilerplate) of 5:

First, summate the deviations of each data point from the mean, and square the effect of each:

The variance is the mean of these values:

and the population standard deviation is equal to the square root of the variance:

This formula is valid only if the eight values with which we began class the complete population. If the values instead were a random sample fatigued from some large parent population (for example, they were 8 students randomly and independently chosen from a class of 2 million), then one divides by 7 (which is n − 1) instead of 8 (which is due north) in the denominator of the last formula, and the upshot is In that case, the result of the original formula would be called the sample standard deviation and denoted past south instead of Dividing by due north − one rather than by n gives an unbiased judge of the variance of the larger parent population. This is known as Bessel's correction.[four] [5] Roughly, the reason for it is that the formula for the sample variance relies on computing differences of observations from the sample hateful, and the sample hateful itself was synthetic to be every bit close as possible to the observations, so just dividing past n would underestimate the variability.

Standard deviation of average acme for adult men [edit]

If the population of interest is approximately normally distributed, the standard divergence provides information on the proportion of observations above or below certain values. For instance, the boilerplate height for adult men in the U.s. is about 70 inches, with a standard deviation of around three inches. This means that most men (nearly 68%, assuming a normal distribution) have a height within 3 inches of the mean (67–73 inches) – one standard difference – and almost all men (virtually 95%) have a acme within half-dozen inches of the hateful (64–76 inches) – two standard deviations. If the standard departure were zero, then all men would exist exactly 70 inches tall. If the standard departure were xx inches, then men would take much more variable heights, with a typical range of nigh l–xc inches. Three standard deviations account for 99.7% of the sample population beingness studied, assuming the distribution is normal or bell-shaped (come across the 68–95–99.7 rule, or the empirical rule, for more information).

Definition of population values [edit]

Let μ be the expected value (the average) of random variable Ten with density f(x):

The standard deviation σ of Ten is divers as

which can be shown to equal

Using words, the standard deviation is the square root of the variance of X.

The standard deviation of a probability distribution is the same equally that of a random variable having that distribution.

Not all random variables accept a standard departure. If the distribution has fat tails going out to infinity, the standard deviation might non exist, because the integral might non converge. The normal distribution has tails going out to infinity, only its mean and standard difference do be, because the tails diminish apace enough. The Pareto distribution with parameter has a mean, but not a standard deviation (loosely speaking, the standard departure is infinite). The Cauchy distribution has neither a mean nor a standard deviation.

Discrete random variable [edit]

In the case where X takes random values from a finite information ready x 1, x ii, …, xN , with each value having the same probability, the standard difference is

or, by using summation note,

If, instead of having equal probabilities, the values accept unlike probabilities, allow ten 1 have probability p 1, x 2 have probability p ii, …, x N have probability p North . In this case, the standard deviation will be

Continuous random variable [edit]

The standard deviation of a continuous real-valued random variable X with probability density role p(10) is

and where the integrals are definite integrals taken for ten ranging over the set up of possible values of the random variableX.

In the case of a parametric family of distributions, the standard deviation tin can be expressed in terms of the parameters. For example, in the case of the log-normal distribution with parameters μ and σ 2, the standard difference is

Estimation [edit]

One can observe the standard divergence of an entire population in cases (such as standardized testing) where every member of a population is sampled. In cases where that cannot be done, the standard deviation σ is estimated past examining a random sample taken from the population and computing a statistic of the sample, which is used as an gauge of the population standard deviation. Such a statistic is chosen an reckoner, and the estimator (or the value of the estimator, namely the guess) is called a sample standard departure, and is denoted past s (possibly with modifiers).

Unlike in the case of estimating the population mean, for which the sample hateful is a simple estimator with many desirable properties (unbiased, efficient, maximum likelihood), there is no single calculator for the standard departure with all these properties, and unbiased estimation of standard deviation is a very technically involved problem. Most frequently, the standard difference is estimated using the corrected sample standard difference (using North − i), defined below, and this is often referred to every bit the "sample standard deviation", without qualifiers. Notwithstanding, other estimators are better in other respects: the uncorrected estimator (using N) yields lower hateful squared error, while using N − 1.5 (for the normal distribution) most completely eliminates bias.

Uncorrected sample standard difference [edit]

The formula for the population standard departure (of a finite population) tin can exist applied to the sample, using the size of the sample as the size of the population (though the bodily population size from which the sample is fatigued may be much larger). This estimator, denoted by s N , is known every bit the uncorrected sample standard difference, or sometimes the standard deviation of the sample (considered as the entire population), and is defined every bit follows:[6]

where are the observed values of the sample items, and is the mean value of these observations, while the denominatorN stands for the size of the sample: this is the foursquare root of the sample variance, which is the average of the squared deviations about the sample hateful.

This is a consistent estimator (it converges in probability to the population value every bit the number of samples goes to infinity), and is the maximum-likelihood estimate when the population is ordinarily distributed.[seven] Still, this is a biased estimator, as the estimates are mostly also low. The bias decreases as sample size grows, dropping off as 1/North, and thus is nearly significant for small or moderate sample sizes; for the bias is below 1%. Thus for very large sample sizes, the uncorrected sample standard deviation is by and large acceptable. This estimator also has a uniformly smaller mean squared error than the corrected sample standard divergence.

Corrected sample standard departure [edit]

If the biased sample variance (the second central moment of the sample, which is a downward-biased judge of the population variance) is used to compute an judge of the population's standard departure, the result is

Here taking the square root introduces further downwards bias, by Jensen'southward inequality, due to the square root's beingness a concave function. The bias in the variance is easily corrected, but the bias from the foursquare root is more than difficult to correct, and depends on the distribution in question.

An unbiased estimator for the variance is given by applying Bessel's correction, using N − one instead of N to yield the unbiased sample variance, denoted s 2:

This estimator is unbiased if the variance exists and the sample values are drawn independently with replacement. North − i corresponds to the number of degrees of freedom in the vector of deviations from the mean,

Taking foursquare roots reintroduces bias (because the foursquare root is a nonlinear function which does non commute with the expectation, i.e. often ), yielding the corrected sample standard difference, denoted by due south:

Every bit explained higher up, while south two is an unbiased computer for the population variance, south is still a biased estimator for the population standard departure, though markedly less biased than the uncorrected sample standard divergence. This estimator is commonly used and more often than not known merely as the "sample standard difference". The bias may nevertheless be large for minor samples (N less than 10). As sample size increases, the corporeality of bias decreases. We obtain more information and the departure between and becomes smaller.

Unbiased sample standard deviation [edit]

For unbiased estimation of standard difference, there is no formula that works across all distributions, unlike for hateful and variance. Instead, s is used as a ground, and is scaled past a correction factor to produce an unbiased gauge. For the normal distribution, an unbiased estimator is given past s/c iv, where the correction factor (which depends on N) is given in terms of the Gamma part, and equals:

This arises because the sampling distribution of the sample standard deviation follows a (scaled) chi distribution, and the correction cistron is the hateful of the chi distribution.

An approximation can be given by replacing Northward − 1 with Due north − 1.v, yielding:

The error in this approximation decays quadratically (as 1/Northward 2), and it is suited for all but the smallest samples or highest precision: for N = 3 the bias is equal to one.3%, and for N = ix the bias is already less than 0.one%.

A more accurate approximation is to supplant higher up with .[8]

For other distributions, the correct formula depends on the distribution, but a dominion of pollex is to use the farther refinement of the approximation:

where γ 2 denotes the population excess kurtosis. The excess kurtosis may exist either known beforehand for certain distributions, or estimated from the data.[ix]

Confidence interval of a sampled standard deviation [edit]

The standard deviation we obtain by sampling a distribution is itself not admittedly accurate, both for mathematical reasons (explained here by the confidence interval) and for applied reasons of measurement (measurement error). The mathematical effect can be described by the confidence interval or CI.

To evidence how a larger sample volition make the confidence interval narrower, consider the following examples: A small-scale population of N = two has but 1 degree of liberty for estimating the standard difference. The outcome is that a 95% CI of the SD runs from 0.45 × SD to 31.9 × SD; the factors here are as follows:

where is the p-th quantile of the chi-foursquare distribution with k degrees of freedom, and is the confidence level. This is equivalent to the following:

With k = 1, and . The reciprocals of the square roots of these two numbers give us the factors 0.45 and 31.ix given above.

A larger population of N = x has 9 degrees of freedom for estimating the standard deviation. The aforementioned computations as higher up give us in this case a 95% CI running from 0.69 × SD to ane.83 × SD. Then fifty-fifty with a sample population of x, the actual SD tin still exist well-nigh a gene two higher than the sampled SD. For a sample population N=100, this is down to 0.88 × SD to 1.16 × SD. To be more certain that the sampled SD is close to the bodily SD we need to sample a big number of points.

These same formulae can be used to obtain conviction intervals on the variance of residuals from a to the lowest degree squares fit under standard normal theory, where k is at present the number of degrees of freedom for error.

Bounds on standard deviation [edit]

For a set of N > 4 information spanning a range of values R, an upper bound on the standard deviation s is given by southward = 0.6R.[x] An guess of the standard divergence for N > 100 data taken to be approximately normal follows from the heuristic that 95% of the area under the normal bend lies roughly 2 standard deviations to either side of the mean, then that, with 95% probability the total range of values R represents four standard deviations so that s ≈ R/iv. This so-called range dominion is useful in sample size estimation, as the range of possible values is easier to gauge than the standard departure. Other divisors 1000(Due north) of the range such that south ≈ R/K(N) are available for other values of Northward and for not-normal distributions.[11]

Identities and mathematical properties [edit]

The standard deviation is invariant under changes in location, and scales directly with the calibration of the random variable. Thus, for a constant c and random variables X and Y:

The standard difference of the sum of two random variables can be related to their private standard deviations and the covariance between them:

where and stand for variance and covariance, respectively.

The calculation of the sum of squared deviations tin be related to moments calculated direct from the data. In the following formula, the alphabetic character E is interpreted to mean expected value, i.e., mean.

The sample standard deviation can be computed as:

For a finite population with equal probabilities at all points, we accept

which means that the standard deviation is equal to the foursquare root of the difference between the average of the squares of the values and the square of the boilerplate value.

See computational formula for the variance for proof, and for an analogous result for the sample standard deviation.

Interpretation and application [edit]

Example of samples from two populations with the aforementioned hateful but dissimilar standard deviations. Red population has mean 100 and SD 10; blue population has mean 100 and SD 50.

A large standard deviation indicates that the information points can spread far from the mean and a pocket-sized standard difference indicates that they are clustered closely effectually the mean.

For example, each of the three populations {0, 0, xiv, 14}, {0, half dozen, eight, xiv} and {6, 6, 8, 8} has a mean of 7. Their standard deviations are 7, five, and 1, respectively. The tertiary population has a much smaller standard divergence than the other 2 because its values are all close to vii. These standard deviations have the same units equally the data points themselves. If, for instance, the data ready {0, vi, 8, 14} represents the ages of a population of four siblings in years, the standard deviation is 5 years. As some other instance, the population {1000, 1006, 1008, 1014} may represent the distances traveled by four athletes, measured in meters. Information technology has a hateful of 1007 meters, and a standard departure of 5 meters.

Standard deviation may serve as a mensurate of doubtfulness. In physical science, for example, the reported standard departure of a group of repeated measurements gives the precision of those measurements. When deciding whether measurements agree with a theoretical prediction, the standard deviation of those measurements is of crucial importance: if the mean of the measurements is too far away from the prediction (with the distance measured in standard deviations), then the theory beingness tested probably needs to be revised. This makes sense since they fall outside the range of values that could reasonably be expected to occur, if the prediction were correct and the standard deviation appropriately quantified. See prediction interval.

While the standard departure does mensurate how far typical values tend to be from the mean, other measures are bachelor. An example is the mean absolute deviation, which might be considered a more straight measure of boilerplate altitude, compared to the root mean foursquare altitude inherent in the standard difference.

Application examples [edit]

The practical value of understanding the standard divergence of a set of values is in appreciating how much variation there is from the average (hateful).

Experiment, industrial and hypothesis testing [edit]

Standard departure is often used to compare real-world data confronting a model to test the model. For example, in industrial applications the weight of products coming off a production line may demand to comply with a legally required value. By weighing some fraction of the products an average weight can be establish, which will always be slightly different from the long-term average. By using standard deviations, a minimum and maximum value tin exist calculated that the averaged weight will be within some very high percent of the time (99.9% or more). If it falls exterior the range then the production procedure may demand to be corrected. Statistical tests such as these are particularly of import when the testing is relatively expensive. For instance, if the product needs to be opened and drained and weighed, or if the production was otherwise used upwardly by the exam.

In experimental science, a theoretical model of reality is used. Particle physics conventionally uses a standard of "5 sigma" for the declaration of a discovery. A five-sigma level translates to one chance in 3.5 one thousand thousand that a random fluctuation would yield the result. This level of certainty was required in order to assert that a particle consequent with the Higgs boson had been discovered in 2 contained experiments at CERN,[12] also leading to the annunciation of the first ascertainment of gravitational waves.[xiii]

Weather [edit]

As a simple instance, consider the average daily maximum temperatures for two cities, one inland and 1 on the coast. It is helpful to sympathise that the range of daily maximum temperatures for cities near the coast is smaller than for cities inland. Thus, while these two cities may each have the same average maximum temperature, the standard deviation of the daily maximum temperature for the coastal urban center will be less than that of the inland city as, on whatsoever item twenty-four hour period, the bodily maximum temperature is more probable to be farther from the average maximum temperature for the inland city than for the coastal one.

Finance [edit]

In finance, standard departure is often used every bit a measure of the risk associated with price-fluctuations of a given asset (stocks, bonds, property, etc.), or the risk of a portfolio of avails[14] (actively managed mutual funds, index mutual funds, or ETFs). Gamble is an of import factor in determining how to efficiently manage a portfolio of investments because information technology determines the variation in returns on the asset and/or portfolio and gives investors a mathematical basis for investment decisions (known as mean-variance optimization). The fundamental concept of risk is that equally it increases, the expected render on an investment should increase also, an increment known as the risk premium. In other words, investors should await a higher return on an investment when that investment carries a higher level of run a risk or uncertainty. When evaluating investments, investors should estimate both the expected return and the incertitude of futurity returns. Standard deviation provides a quantified estimate of the uncertainty of future returns.

For example, presume an investor had to cull between two stocks. Stock A over the past 20 years had an average return of 10 percent, with a standard deviation of twenty percentage points (pp) and Stock B, over the aforementioned menses, had average returns of 12 percent but a higher standard divergence of 30 pp. On the basis of hazard and render, an investor may decide that Stock A is the safer selection, considering Stock B'south additional ii percentage points of render is not worth the additional 10 pp standard deviation (greater risk or incertitude of the expected return). Stock B is likely to fall short of the initial investment (but also to exceed the initial investment) more often than Stock A under the same circumstances, and is estimated to render only ii per centum more on boilerplate. In this case, Stock A is expected to earn most 10 percent, plus or minus xx pp (a range of 30 pct to −10 percent), nigh two-thirds of the future twelvemonth returns. When considering more extreme possible returns or outcomes in future, an investor should look results of equally much as ten pct plus or minus 60 pp, or a range from 70 percent to −50 percent, which includes outcomes for three standard deviations from the average return (about 99.vii per centum of likely returns).

Computing the boilerplate (or arithmetics mean) of the return of a security over a given period will generate the expected return of the nugget. For each period, subtracting the expected return from the actual return results in the difference from the hateful. Squaring the difference in each period and taking the average gives the overall variance of the return of the asset. The larger the variance, the greater risk the security carries. Finding the square root of this variance will give the standard departure of the investment tool in question.

Population standard departure is used to set the width of Bollinger Bands, a technical analysis tool. For example, the upper Bollinger Band is given as The near commonly used value for n is 2; there is about a five percent chance of going outside, assuming a normal distribution of returns.

Financial time series are known to be non-stationary serial, whereas the statistical calculations higher up, such every bit standard divergence, employ just to stationary series. To utilise the above statistical tools to non-stationary series, the series beginning must be transformed to a stationary serial, enabling use of statistical tools that now have a valid basis from which to work.

Geometric interpretation [edit]

To gain some geometric insights and description, we will commencement with a population of iii values, 10 1, 10 ii, 10 3. This defines a indicate P = (ten 1, x 2, x 3) in R 3. Consider the line L = {(r, r, r) : r ∈ R}. This is the "primary diagonal" going through the origin. If our three given values were all equal, then the standard difference would be cipher and P would lie on L. So information technology is not unreasonable to assume that the standard divergence is related to the distance of P to L. That is indeed the case. To move orthogonally from Fifty to the point P, one begins at the point:

whose coordinates are the mean of the values we started out with.

| Derivation of |

|---|

| is on therefore for some . The line is to be orthogonal to the vector from to . Therefore: |

A petty algebra shows that the altitude between P and Grand (which is the same as the orthogonal distance between P and the line Fifty) is equal to the standard difference of the vector (10 1, x 2, x three), multiplied by the square root of the number of dimensions of the vector (iii in this case).

Chebyshev's inequality [edit]

An observation is rarely more than a few standard deviations away from the mean. Chebyshev's inequality ensures that, for all distributions for which the standard deviation is defined, the amount of information inside a number of standard deviations of the mean is at least equally much as given in the following table.

| Altitude from mean | Minimum population |

|---|---|

| fifty% | |

| 2σ | 75% |

| iiiσ | 89% |

| 4σ | 94% |

| 5σ | 96% |

| 6σ | 97% |

| [xv] | |

Rules for unremarkably distributed data [edit]

Dark bluish is one standard deviation on either side of the mean. For the normal distribution, this accounts for 68.27 percent of the set; while 2 standard deviations from the mean (medium and nighttime blueish) account for 95.45 pct; three standard deviations (calorie-free, medium, and dark blue) account for 99.73 percent; and four standard deviations business relationship for 99.994 per centum. The two points of the curve that are one standard divergence from the hateful are also the inflection points.

The central limit theorem states that the distribution of an boilerplate of many independent, identically distributed random variables tends toward the famous bong-shaped normal distribution with a probability density function of

where μ is the expected value of the random variables, σ equals their distribution'due south standard departure divided by northward 1/2, and n is the number of random variables. The standard deviation therefore is merely a scaling variable that adjusts how wide the curve volition exist, though it likewise appears in the normalizing constant.

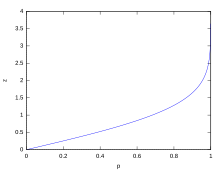

If a information distribution is approximately normal, then the proportion of data values inside z standard deviations of the hateful is defined by:

where is the error office. The proportion that is less than or equal to a number, x, is given past the cumulative distribution function:

- .[xvi]

If a data distribution is approximately normal then about 68 per centum of the information values are inside one standard deviation of the hateful (mathematically, μ ±σ, where μ is the arithmetic mean), about 95 percentage are inside ii standard deviations (μ ± 2σ), and about 99.vii percentage prevarication within three standard deviations (μ ± threeσ). This is known equally the 68–95–99.7 rule, or the empirical rule.

For diverse values of z, the percentage of values expected to lie in and exterior the symmetric interval, CI = (−zσ,zσ), are as follows:

| Confidence interval | Proportion within | Proportion without | |

|---|---|---|---|

| Per centum | Percent | Fraction | |

| 0.318639 σ | 25% | 75% | 3 / four |

| 0.674490 σ | 50% | 50% | ane / ii |

| 0.977925 σ | 66.6667% | 33.3333% | 1 / 3 |

| 0.994458 σ | 68% | 32% | 1 / 3.125 |

| 1σ | 68.2689492 % | 31.7310508 % | i / 3.1514872 |

| 1.281552 σ | 80% | 20% | 1 / 5 |

| 1.644854 σ | 90% | 10% | 1 / 10 |

| 1.959964 σ | 95% | 5% | 1 / 20 |

| iiσ | 95.4499736 % | 4.5500264 % | 1 / 21.977895 |

| 2.575829 σ | 99% | 1% | one / 100 |

| iiiσ | 99.7300204 % | 0.2699796 % | 1 / 370.398 |

| 3.290527 σ | 99.9% | 0.1% | ane / 1000 |

| three.890592 σ | 99.99% | 0.01% | ane / ten000 |

| 4σ | 99.993666 % | 0.006334 % | ane / 15787 |

| 4.417173 σ | 99.999% | 0.001% | 1 / 100000 |

| 4.5 σ | 99.999320 465 3751% | 0.000679 534 6249% | one / 147159.5358 6.8 / ane000 000 |

| iv.891638 σ | 99.9999% | 0.0001% | ane / 1000 000 |

| fiveσ | 99.999942 6697 % | 0.000057 3303 % | 1 / 1744 278 |

| 5.326724 σ | 99.99999 % | 0.00001 % | 1 / x000 000 |

| 5.730729 σ | 99.999999 % | 0.000001 % | 1 / 100000 000 |

| 6 σ | 99.999999 8027 % | 0.000000 1973 % | 1 / 506797 346 |

| half dozen.109410 σ | 99.9999999 % | 0.0000001 % | 1 / one000 000 000 |

| 6.466951 σ | 99.999999 99 % | 0.000000 01 % | 1 / 10000 000 000 |

| 6.806502 σ | 99.999999 999 % | 0.000000 001 % | 1 / 100000 000 000 |

| 7σ | 99.999999 999 7440% | 0.000000 000 256 % | one / 390682 215 445 |

Relationship between standard departure and mean [edit]

The mean and the standard difference of a gear up of data are descriptive statistics ordinarily reported together. In a certain sense, the standard deviation is a "natural" measure of statistical dispersion if the center of the data is measured about the mean. This is because the standard deviation from the mean is smaller than from any other point. The precise statement is the following: suppose x ane, ..., 10 n are existent numbers and ascertain the function:

Using calculus or past completing the foursquare, it is possible to testify that σ(r) has a unique minimum at the mean:

Variability can also be measured past the coefficient of variation, which is the ratio of the standard difference to the mean. It is a dimensionless number.

Standard departure of the mean [edit]

Often, we want some information about the precision of the mean we obtained. We can obtain this by determining the standard departure of the sampled mean. Bold statistical independence of the values in the sample, the standard deviation of the mean is related to the standard deviation of the distribution past:

where N is the number of observations in the sample used to approximate the mean. This can hands be proven with (come across basic properties of the variance):

(Statistical independence is causeless.)

hence

Resulting in:

In order to estimate the standard deviation of the mean information technology is necessary to know the standard deviation of the unabridged population beforehand. However, in most applications this parameter is unknown. For example, if a series of 10 measurements of a previously unknown quantity is performed in a laboratory, it is possible to summate the resulting sample mean and sample standard difference, but it is impossible to calculate the standard deviation of the mean. However, ane tin estimate the standard deviation of the entire population from the sample, and thus obtain an estimate for the standard error of the hateful.

Rapid adding methods [edit]

The post-obit two formulas can represent a running (repeatedly updated) standard deviation. A set of 2 power sums s 1 and due south 2 are computed over a fix of N values of x, denoted as x 1, ..., x N :

Given the results of these running summations, the values North, south 1, due south two tin exist used at any time to compute the current value of the running standard deviation:

Where Northward, as mentioned in a higher place, is the size of the fix of values (or tin besides be regarded as due south 0).

Similarly for sample standard deviation,

In a computer implementation, every bit the two s j sums become large, nosotros need to consider round-off error, arithmetic overflow, and arithmetics underflow. The method below calculates the running sums method with reduced rounding errors.[17] This is a "one pass" algorithm for calculating variance of n samples without the need to store prior information during the adding. Applying this method to a time series will effect in successive values of standard deviation corresponding to n data points as n grows larger with each new sample, rather than a constant-width sliding window calculation.

For thou = 1, ..., n:

where A is the hateful value.

Note: since or

Sample variance:

Population variance:

Weighted calculation [edit]

When the values xi are weighted with unequal weights wi , the ability sums s 0, s 1, s 2 are each computed as:

And the standard difference equations remain unchanged. s 0 is now the sum of the weights and not the number of samples North.

The incremental method with reduced rounding errors tin likewise be applied, with some additional complexity.

A running sum of weights must be computed for each k from 1 to n:

and places where 1/north is used above must be replaced by wi /Wnorthward :

In the final segmentation,

and

or

where due north is the total number of elements, and n' is the number of elements with not-nada weights.

The in a higher place formulas become equal to the simpler formulas given above if weights are taken as equal to one.

History [edit]

The term standard difference was offset used in writing by Karl Pearson in 1894, following his use of it in lectures.[xviii] [xix] This was as a replacement for earlier alternative names for the same thought: for case, Gauss used mean error.[twenty]

College dimensions [edit]

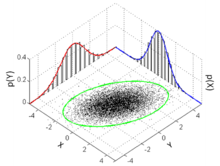

The standard deviation ellipse (light-green) of a ii-dimensional normal distribution

In two dimensions, the standard deviation can be illustrated with the standard deviation ellipse (see Multivariate normal distribution § Geometric interpretation).

Meet also [edit]

- 68–95–99.7 rule

- Accuracy and precision

- Chebyshev'due south inequality An inequality on location and scale parameters

- Coefficient of variation

- Cumulant

- Difference (statistics)

- Distance correlation Distance standard deviation

- Error bar

- Geometric standard deviation

- Mahalanobis distance generalizing number of standard deviations to the mean

- Hateful absolute error

- Pooled variance

- Propagation of dubiety

- Percentile

- Raw data

- Robust standard departure

- Root mean square

- Sample size

- Samuelson'due south inequality

- Half-dozen Sigma

- Standard error

- Standard score

- Yamartino method for calculating standard departure of wind direction

References [edit]

- ^ Banal, J.M.; Altman, D.G. (1996). "Statistics notes: measurement fault". BMJ. 312 (7047): 1654. doi:10.1136/bmj.312.7047.1654. PMC2351401. PMID 8664723.

- ^ Gauss, Carl Friedrich (1816). "Bestimmung der Genauigkeit der Beobachtungen". Zeitschrift für Astronomie und Verwandte Wissenschaften. ane: 187–197.

- ^ Walker, Helen (1931). Studies in the History of the Statistical Method. Baltimore, MD: Williams & Wilkins Co. pp. 24–25.

- ^ Weisstein, Eric W. "Bessel's Correction". MathWorld.

- ^ "Standard Divergence Formulas". www.mathsisfun.com . Retrieved 21 August 2020.

- ^ Weisstein, Eric W. "Standard Divergence". mathworld.wolfram.com . Retrieved 21 August 2020.

- ^ "Consequent estimator". www.statlect.com . Retrieved ten October 2022.

- ^ Gurland, John; Tripathi, Ram C. (1971), "A Unproblematic Approximation for Unbiased Interpretation of the Standard Deviation", The American Statistician, 25 (4): 30–32, doi:10.2307/2682923, JSTOR 2682923

- ^ "Standard Deviation Calculator". PureCalculators. xi July 2021. Retrieved 14 September 2021.

- ^ Shiffler, Ronald E.; Harsha, Phillip D. (1980). "Upper and Lower Premises for the Sample Standard Deviation". Teaching Statistics. 2 (3): 84–86. doi:x.1111/j.1467-9639.1980.tb00398.x.

- ^ Browne, Richard H. (2001). "Using the Sample Range as a Basis for Calculating Sample Size in Power Calculations". The American Statistician. 55 (four): 293–298. doi:10.1198/000313001753272420. JSTOR 2685690. S2CID 122328846.

- ^ "CERN experiments observe particle consistent with long-sought Higgs boson | CERN press function". Press.spider web.cern.ch. 4 July 2012. Archived from the original on 25 March 2016. Retrieved 30 May 2015.

- ^ LIGO Scientific Collaboration, Virgo Collaboration (2016), "Observation of Gravitational Waves from a Binary Black Hole Merger", Physical Review Letters, 116 (half-dozen): 061102, arXiv:1602.03837, Bibcode:2016PhRvL.116f1102A, doi:10.1103/PhysRevLett.116.061102, PMID 26918975, S2CID 124959784

- ^ "What is Standard Departure". Pristine. Retrieved 29 Oct 2011.

- ^ Ghahramani, Saeed (2000). Fundamentals of Probability (2nd ed.). New Bailiwick of jersey: Prentice Hall. p. 438. ISBN9780130113290.

- ^ Eric West. Weisstein. "Distribution Function". MathWorld—A Wolfram Spider web Resource. Retrieved 30 September 2014.

- ^ Welford, BP (August 1962). "Note on a Method for Computing Corrected Sums of Squares and Products". Technometrics. 4 (iii): 419–420. CiteSeerX10.1.1.302.7503. doi:10.1080/00401706.1962.10490022.

- ^ Contrivance, Yadolah (2003). The Oxford Dictionary of Statistical Terms . Oxford University Press. ISBN978-0-19-920613-ane.

- ^ Pearson, Karl (1894). "On the dissection of asymmetrical frequency curves". Philosophical Transactions of the Imperial Guild A. 185: 71–110. Bibcode:1894RSPTA.185...71P. doi:10.1098/rsta.1894.0003.

- ^ Miller, Jeff. "Primeval Known Uses of Some of the Words of Mathematics".

External links [edit]

- "Quadratic deviation", Encyclopedia of Mathematics, Ems Press, 2001 [1994]

- "Standard Deviation Calculator"

Percentage Within 1 Standard Deviation,

Source: https://en.wikipedia.org/wiki/Standard_deviation

Posted by: adamswaaked.blogspot.com

![{\displaystyle \mu \equiv \operatorname {E} [X]=\int _{-\infty }^{+\infty }xf(x)\,\mathrm {d} x}](https://wikimedia.org/api/rest_v1/media/math/render/svg/fb2a61843da0d05619c0dd691dbf3fe315b395ad)

![{\displaystyle \sigma \equiv {\sqrt {\operatorname {E} \left[(X-\mu )^{2}\right]}}={\sqrt {\int _{-\infty }^{+\infty }(x-\mu )^{2}f(x)\,\mathrm {d} x}},}](https://wikimedia.org/api/rest_v1/media/math/render/svg/e3a1cfef8ad100fbcae387d9581763f0b389bbc3)

![{\textstyle {\sqrt {\operatorname {E} \left[X^{2}\right]-(\operatorname {E} [X])^{2}}}.}](https://wikimedia.org/api/rest_v1/media/math/render/svg/e2dd8d466c3ecb05713377fefcb7e7f787b29ce7)

![{\displaystyle \alpha \in (1,2]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/782b1d598278b0238ee817c658744e8a7ed3a06e)

![{\displaystyle \sigma ={\sqrt {{\frac {1}{N}}\left[(x_{1}-\mu )^{2}+(x_{2}-\mu )^{2}+\cdots +(x_{N}-\mu )^{2}\right]}},{\text{ where }}\mu ={\frac {1}{N}}(x_{1}+\cdots +x_{N}),}](https://wikimedia.org/api/rest_v1/media/math/render/svg/827beb1be760eed3cb07b20d29f01d326f728071)

![{\displaystyle E[{\sqrt {X}}]\neq {\sqrt {E[X]}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/79fa5d2ba8891a41598ddeadc10ba844a20cdfa0)

![{\displaystyle \sigma (X)={\sqrt {\operatorname {E} \left[(X-\operatorname {E} [X])^{2}\right]}}={\sqrt {\operatorname {E} \left[X^{2}\right]-(\operatorname {E} [X])^{2}}}.}](https://wikimedia.org/api/rest_v1/media/math/render/svg/d3ab12089bd2027790ef060ff7cc2ec05ae2021f)

![{\displaystyle s(X)={\sqrt {\frac {N}{N-1}}}{\sqrt {\operatorname {E} \left[(X-\operatorname {E} [X])^{2}\right]}}.}](https://wikimedia.org/api/rest_v1/media/math/render/svg/702e9da21c721697e6e81932bf8b7443028f7d6d)

\cdot (x_{1}-\ell ,x_{2}-\ell ,x_{3}-\ell )&=0\\[4pt]r(x_{1}-\ell +x_{2}-\ell +x_{3}-\ell )&=0\\[4pt]r\left(\sum _{i}x_{i}-3\ell \right)&=0\\[4pt]\sum _{i}x_{i}-3\ell &=0\\[4pt]{\frac {1}{3}}\sum _{i}x_{i}&=\ell \\[4pt]{\bar {x}}&=\ell \end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/51526a39caa45834866ae2dc4bb3ed262ba7fbe0)

![{\displaystyle {\text{Proportion}}\leq x={\frac {1}{2}}\left[1+\operatorname {erf} \left({\frac {x-\mu }{\sigma {\sqrt {2}}}}\right)\right]={\frac {1}{2}}\left[1+\operatorname {erf} \left({\frac {z}{\sqrt {2}}}\right)\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/3907d1b0502235fa3fd00f261b290406a02e7b21)

0 Response to "Percentage Within 1 Standard Deviation"

Post a Comment